I keep going on about why software development should be done by empowered cross-functional teams. A lot of actual experts have written very well, and at length, about this. In manufacturing, the Japanese showed why it matters in the 1980s, and the American automotive industry drew the wrong lessons from it, but that is a separate issue. For some light reading on these topics I recommend People Before Tech by Duena Blomstrom and Eli Goldratt’s The Goal.

Incorrect conclusions from the Toyota Production System

The emergence of Six Sigma was a perfect example of drawing completely the wrong conclusion from the TPS¹. In manufacturing, and in any other process where you do the exact same thing many times over, you do need to do a few sensible things, like examining your value chain and eliminating waste. Figuring out exactly how to fit a dashboard in a car 20 seconds faster, or providing automated power tools that let the fitter apply exactly the correct torque without any manual settings, creates massive value. Communicating and re-evaluating these procedures to optimise them gradually further ties directly into value creation.

But transferring that way of working to an office full of software developers where you hopefully solve different problems every single day (or you would use the software you have already written, or license existing software rather than waste resources building something that already exists) is purely negative, causing unnecessary bureaucracy that actually prevents value creation.

Also, the exact processes developed at Toyota, or even at its highly successful joint venture with General Motors, NUMMI, were never the success factor. The success factor was the introspection: the empowerment to inspect and adapt processes at the leaf nodes of the organisation. GM’s attempts to bring the exact processes back to Detroit failed miserably. The key is in the meta-process, the mission and purpose, as discussed by Richard Pascale in The Art of Japanese Management and by Tom Peters in In Search of Excellence.

The value chain

The books I mentioned at the beginning explain how to look at work as it flows through an organisation as a chain, where pieces of work are handed off between different parts of the organisation as different specialists do their part. Obviously there needs to be authorisation and executive oversight to make sure nothing that runs contrary to the ethos of the company gets done in its name, and there are multiple regulatory and legal concerns that a company wants to safeguard. I want to make it clear that I am not proposing we remove those safeguards, but the total actual work that is done needs mapping out. Executives, and especially developers, rarely have a full picture of what is really going on; for example, there may be workarounds for perceived failings in the systems in use that have never been accurately reported.

A more detailed approach, a kind of DLC for the Value Stream Mapping process, is called Event Storming: you gather stakeholders in a room to map out exactly the actors and the information that make up a business process. This can take days, and may seem like a waste of a meeting room, but the knowledge that comes out of it is very real, as long as you make sure it does not become a fun exercise for architects only, and involve representatives of the real people who carry out these processes day-to-day.

The waste

What is waste, then? Well, spending time on things that do not even indirectly create value. Having people wait for approvals rather than ship their work. The product team creating tickets six months ahead of time that then need to be thrown away because the business needs to go in a different direction. Writing documentation nobody reads (it is the “nobody reads” that is the problem there, so work on writing good, useful and discoverable documentation, and skip the rest). Having two or more teams work on solving the same problem without coordination, although coordination has a cost as well, so there is a tradeoff here. Sometimes it is less wasteful for two teams to solve the same problem independently if it leads to faster time to market, as long as the maintenance burden created is minimal.

On a value stream map it becomes painfully clear where you need information before it exists, or where dependencies flow in the wrong direction. With enough seniority in the room you can make decisions on what matters, and with enough individual contributors in the room you can agree on practical solutions that make people’s lives easier. You can see handoffs that are unnecessary or approvals that could be determined algorithmically, find ways of making late decisions based on correct data, or find ways of implementing safeguards that do not cost the business time.
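To make “approvals that could be determined algorithmically” a little more concrete, here is a minimal Python sketch. The `ChangeRequest` fields and the 200-line threshold are hypothetical examples, not a recommended policy; the point is only that once the criteria are written down, routine cases can be approved by a rule and humans see just the risky ones.

```python
from dataclasses import dataclass


@dataclass
class ChangeRequest:
    # Hypothetical fields: each organisation would choose its own criteria.
    lines_changed: int
    touches_regulated_area: bool
    tests_passed: bool
    has_rollback_plan: bool


def needs_manual_approval(change: ChangeRequest, max_lines: int = 200) -> bool:
    """Return True if the change should go to a human approver.

    The thresholds are illustrative assumptions, not a standard.
    """
    if not (change.tests_passed and change.has_rollback_plan):
        return True  # never auto-approve without green tests and a way back
    if change.touches_regulated_area:
        return True  # keep the human safeguard where the regulator cares
    return change.lines_changed > max_lines  # large changes get a second look


# A small, tested, reversible change outside regulated code is approved by rule.
print(needs_manual_approval(ChangeRequest(40, False, True, True)))  # False
```

The safeguard is still there; it just stops costing the business time on the changes it was never going to catch anything in.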

As a small cog in a big machine it is sometimes very difficult to know which parts of your daily struggles add value to, or detract value from, the business as a whole, and these types of exercises are very useful in making things visible. The organisation is also forced to resolve unspoken things like power struggles, so that business decisions are made at a sensible level with clear lines of authority. Businesses with a lot of informal authority or informal hierarchies in particular can struggle to put into words how they do things day-to-day, but it is very important that what is documented is the current, unvarnished truth. Otherwise you end up optimising the wrong thing, and as you learn repeatedly in The Goal, optimising anywhere other than the constraint is useless.

But what – why is a handoff between teams “waste”?

There are some unappealing truths in The Goal, e.g. that the ideal batch size is 1 and that you need slack in the system, but when you think about them, they are true:

Slack in the system

Say, for instance, you are afraid of your regulator, with good reason, and you know from bitter experience that software developers are cowboys. You hire an ops team to gatekeep, to prevent developers from running around with direct access to production, and now the ops team relies on detailed instructions from the developers on how to install the freshly created software into production, yet the developers are not privy to exactly how production works. Hilarity ensues and deployments often fail. It becomes hard for the business to know when their features go out, because both ops and dev are flying blind. In addition, the ops team is 100% utilised: they are deploying and configuring things all day, so any failed deployment (or, let’s be honest, a botched configuration change ops attempted on their own without any developer to blame) always leads to delays, and the lead time for a deployment stretches to two weeks, and then further.

OK, so let’s say we solve that: ops build, or commission, a pipeline that they accept is secure, with enough controls and reliable enough rollback to be trusted in the hands of a pair of developers. Bosh, we have solved the deployment problem. Developers can only release code that works, or it will be rolled back, without them needing the actual keys to the kingdom; they have a button and observability, and that’s all they need. Of course, we developers will find new ways to break production, but the fact remains that rollback is easy with this new magical pipeline.

Of course, this state of affairs is magical thinking: no overworked ops team is going to have the spare capacity to work on tooling. What actually tended to happen was that the business hired a “devops team”, which unfortunately wasn’t trusted with access to production either, so you might end up with separate automation for ops and dev (the “devops team” writes and maintains CI/CD tooling and some developer observability, while the ops team runs its own management platform and liveness monitoring), which does not really solve the problem. The ops team needs time to introspect and improve its processes, i.e. slack in the system.
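The cost of running an ops team at 100% utilisation can be put into rough numbers with basic queueing theory. As a simplification, assume the team behaves like a single M/M/1 queue: requests arrive at random, each job takes a random time averaging S hours, and one job is handled at a time. The standard result is that the mean wait before a job is even started is ρ/(1 − ρ) × S, where ρ is utilisation. The sketch below is an illustration under those assumptions, not a model of any real team.

```python
def mm1_mean_wait(utilisation: float, mean_job_hours: float) -> float:
    """Mean time a request waits in the queue before work on it starts.

    Uses the M/M/1 result Wq = rho / (1 - rho) * S, which only holds
    for utilisation rho strictly below 1; at 100% the queue grows without bound.
    """
    if not 0 <= utilisation < 1:
        raise ValueError("utilisation must be at least 0 and below 1")
    return utilisation / (1 - utilisation) * mean_job_hours


# Assumed: every deployment or config change takes one hour of ops time on average.
for rho in (0.5, 0.8, 0.9, 0.95, 0.99):
    wait = mm1_mean_wait(rho, mean_job_hours=1.0)
    print(f"{rho:.0%} busy: about {wait:.0f} hours waiting per 1-hour job")
```

Under these assumptions the wait goes from about one job’s length at 50% busy to about 99 jobs’ length at 99% busy. Slack is what keeps a team on the flat part of that curve.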

Ideal batch size is 1

Let us say you have iterations, i.e. the “agile is multiple waterfalls in a row” trope. You work for a bit, then you push the new code to classic QA, who revise their test plans and test your changes as much as they can before the release. You end up with 60 Jira tickets that need QA sign-off on all browsers before the release, and you need to dedicate a middle manager to go around and hover behind the shoulders of devs and QA until all questions are straightened out and the various bugs and features have been verified in the dedicated test environment.

A test plan for the release is created, perhaps a dry run is carried out, and you warn support that things are about to go down. You bring half the estate down, install the updates on one side of the load balancer, and let the QAs test the deployed tickets. They will try to test as much as they can of the 60 tickets without taking the proverbial, given that the whole estate is currently serving prod traffic from only a subset of the instances. Once they are happy, prod is switched over to the freshly deployed side, and a couple of interested parties start refreshing the logs to see if anything bad seems to be happening while the second half of the system is deployed. Finally, the firewall is reset to normal and monitoring is re-enabled.

So that is a “normal” release for your department, and it requires quite a lot of people to go to DEFCON 2 and dedicate their time to shepherding the release into production. A lot of the complexity of the release comes from the sheer size of the changes. If you were deploying a small service with one change, the work to validate that it is working would be minimal, and you would also immediately know what is broken, because you know exactly what you changed. With large change sets, if you start seeing an Internal Server Error becoming common, you have no real clue what went wrong, unless you are lucky and the error message makes immediate sense; and if it were a simple problem, you would probably have caught it in the various test stages beforehand.

Now imagine that one month the marquee feature planned for the release was a little bit too big to be released in its entirety, so the powers that be decide: hey, let’s just combine this sprint with the next one and push the release out another two weeks.

Come QA validation before the delayed release, there are 120 tickets to validate. Do you think that takes twice the time, or more? Well, you only get the same three days to do the job, and it’s up to the Head of QA to figure it out, but the test plan is longer, which makes the release take 10 hours, four of which are spent in limbo while the estate is running at half capacity.
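One way to see why doubling the batch more than doubles the work: if any two tickets in a release might interact (shared code, shared data, shared screens), the number of pairs to worry about grows quadratically. The sketch below assumes, as a simplification, that ticket pairs are the unit of risk, and uses the standard library’s `math.comb`.

```python
from math import comb


def possible_pairs(tickets: int) -> int:
    """Number of distinct ticket pairs that could interact in one release."""
    return comb(tickets, 2)


for n in (1, 60, 120):
    print(f"{n} tickets -> {possible_pairs(n)} possible pairs")
```

Going from 60 to 120 tickets takes the pairs from 1,770 to 7,140, roughly four times as many, while a single-change release has none at all.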

So yeah, you want to make releases easy to roll back and fast to do, and rely heavily on automated validation to avoid needing manual verification, but most of all, you want to keep the change sets small. Automation makes that an easier choice, but you could choose to release often even with manual validation. It just seems to be human nature to prefer a traumatic delivery every two weeks over slight nausea every afternoon.
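A minimal sketch of “easy to roll back” combined with automated validation. The `deploy` and `is_healthy` callables are hypothetical stand-ins for whatever a real pipeline would call (a deployment tool, a health endpoint, an error-rate query); the sketch only shows the control flow of shipping one small change, checking it automatically, and putting the previous version back without a human in the loop if the checks fail.

```python
from typing import Callable


def deploy_with_rollback(
    deploy: Callable[[str], None],   # hypothetical: pushes a version to production
    is_healthy: Callable[[], bool],  # hypothetical: automated post-deploy check
    current_version: str,
    new_version: str,
    checks: int = 3,
) -> str:
    """Deploy new_version; if any health check fails, redeploy current_version.

    Returns the version left running in production.
    """
    deploy(new_version)
    for _ in range(checks):
        # A real pipeline would wait between checks; that is omitted here.
        if not is_healthy():
            deploy(current_version)  # automatic rollback to the last known good version
            return current_version
    return new_version


# Usage with fakes: record deployments and simulate a failing health check.
deployed = []
result = deploy_with_rollback(deployed.append, lambda: False, "v41", "v42")
print(result, deployed)  # v41 ['v42', 'v41']
```

With one change per release, a rollback also tells you exactly which change was at fault.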

So what are the right conclusions to draw from TPS?

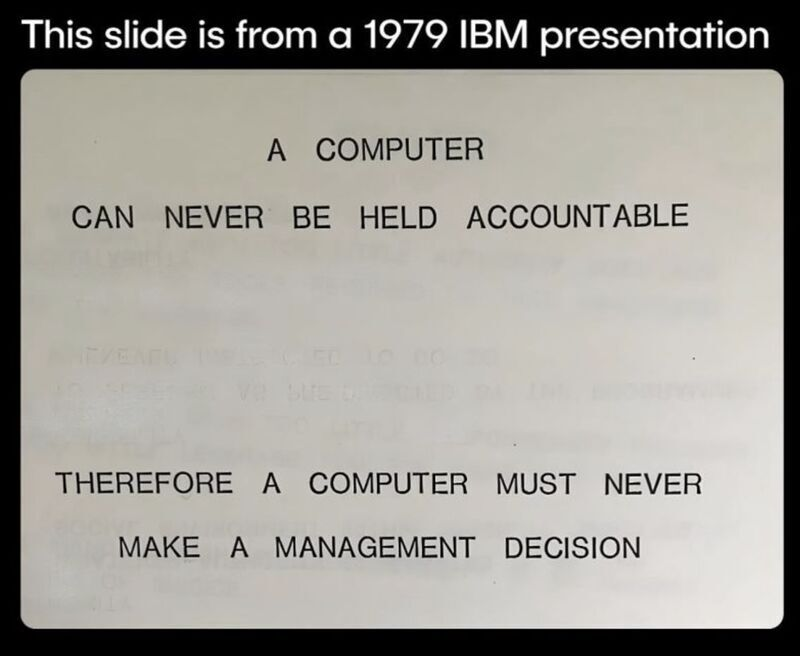

Well, the main things are go and see, and continuous improvement. Allow the organisation to learn from previous performance, and empower people to make decisions at the level where it makes sense; e.g. discussions about tabs vs spaces should not travel further up the organisation than the developer collective, and some discussions should not leave the team at all. If you give teams accountability for cloud spend, along with the visibility and the authority to effect change, you will see teams waste less money, but if the cost is hard to attribute and only visible at a departmental level, your developers are going to shrug their shoulders because they do not see how they can effect change. If you allow teams some autonomy in how they solve problems, whilst giving them clear visibility of what the Customer (in the agile sense) wants and needs, the teams can make correct small tradeoffs at their level without derailing the greater good. So, basically, make work visible, and let teams know the truth about how their software is working and how their users feel about it. Let developers see how users use their software. Do not be afraid to show how much the team costs, so that they can make reasonable suggestions, like “we could automate these three things, and given our estimated running cost, that would cost the business roughly 50 grand, and we think that would be money well spent because a), b), c) […]”, or alternatively “that would be cool, but there is no way we could produce that for you for a defensible amount of money; let us look at what exists on the market that we can plug into to solve the problem without writing code”.
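The “roughly 50 grand” kind of suggestion is simple arithmetic once a team can see its own running cost. A minimal sketch follows; every number in it (weekly team cost, build time, hours saved, hourly rate) is a hypothetical placeholder, not a figure from any real team.

```python
def build_cost(team_weekly_cost: float, weeks: float) -> float:
    """What the team's time costs the business for a piece of work."""
    return team_weekly_cost * weeks


def payback_weeks(cost: float, hours_saved_per_week: float, hourly_rate: float) -> float:
    """Weeks until the automation has paid for itself (infinite if it saves nothing)."""
    weekly_saving = hours_saved_per_week * hourly_rate
    if weekly_saving <= 0:
        return float("inf")
    return cost / weekly_saving


# Hypothetical numbers: a team costing 10,000 a week spends five weeks on automation
# that saves 20 hours of manual work a week, valued at 60 an hour.
cost = build_cost(team_weekly_cost=10_000, weeks=5)
print(f"cost {cost:,.0f}, pays back in {payback_weeks(cost, 20, 60):.0f} weeks")
```

With those made-up inputs the work costs 50,000 and pays for itself in a bit over 40 weeks; the useful part is that the team can run the numbers itself and bring the conclusion, not just the wish.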

Everyone at work is a grown-up, so if you think you are getting unrealistic suggestions from your development teams, consider whether you have perhaps hidden too much relevant information from them. If we figure out how to make relevant information easily accessible, we could give everyone a better understanding not only of what is happening right now but, more importantly, of what reasonably should happen next. This also helps upper management understand what each team is doing. If you meet internal resistance to this, consider why, because that in itself could explain problems you might be having.

- The initialism TPS stands for Toyota Production System, as you may deduce from its proximity to the headline, but I acknowledge the existence of TPS reports in popular culture, i.e. Office Space. I do not believe they are related.